After the BliKVM PCIe failed to work at all, I decided to try one of the new little IP KVM units out there. I went with the JetKVM because its main distributor isn’t AliExpress and it seemed to be pretty high quality on top of that. I wanted to set it up so I could remotely power on my NVR as well as monitor it.

Continue reading “JetKVM”

Updating the Webserver to Ubuntu 24.04

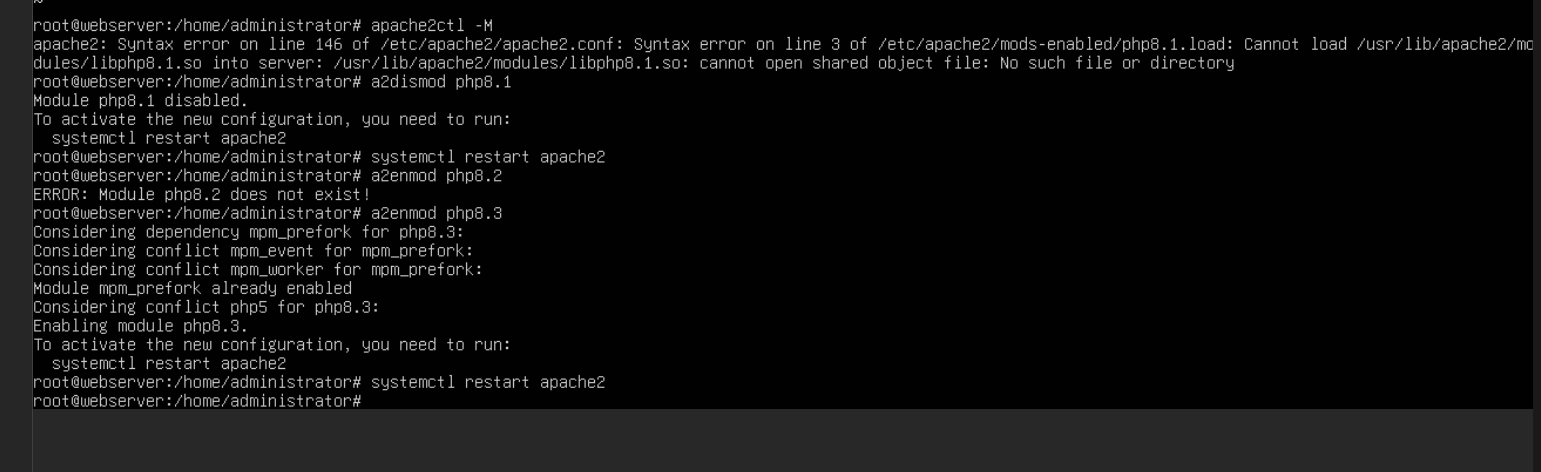

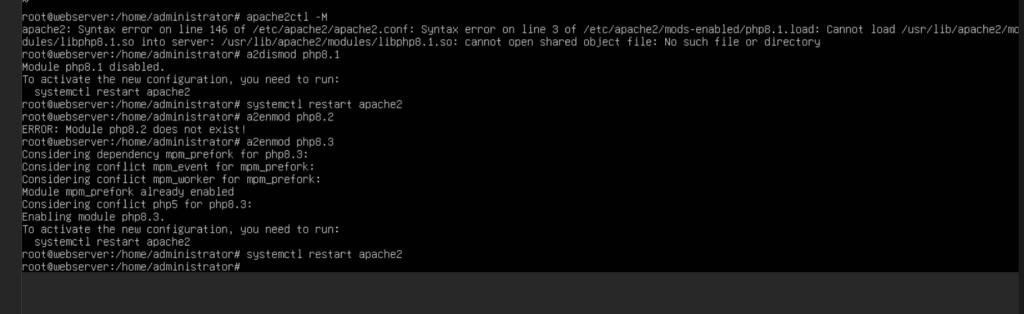

I upgraded my webserver to Ubuntu 24.04 LTS recently and ran into an odd little issue: Apache2 refused to start because a PHP module failed to load. Specifically, it was the PHP 8.1 module, since 8.1 was replaced in the repos with 8.3. This ended up having a quick fix.

All I needed to do was disable the old PHP mod and enable the new one. I used this reference for which versions would be supported in 24.04 to enable the correct version. Overall, a nice quick fix, and my webserver was back in operation.

> sudo a2dismod php8.1

> sudo systemctl restart apache2

> sudo a2enmod php8.3

> sudo systemctl restart apache2
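For anyone repeating this with different PHP versions, here is a minimal sketch of the same swap as a small shell function. It only prints the commands rather than running them, so they can be checked first. The function name `switch_php_mod` is made up for illustration, and the extra `apache2ctl configtest` step is my own addition as a sanity check before the restart, not something from the steps above.

```shell
#!/bin/sh
# Sketch only: prints the commands for swapping Apache's PHP module
# instead of running them, so the output can be reviewed first.
# switch_php_mod is a hypothetical helper name, not part of Apache or Ubuntu.
switch_php_mod() {
    old="$1"   # PHP version to disable, e.g. 8.1
    new="$2"   # PHP version to enable, e.g. 8.3
    echo "sudo a2dismod php${old}"
    echo "sudo a2enmod php${new}"
    # configtest checks the Apache config syntax before the restart
    echo "sudo apache2ctl configtest"
    echo "sudo systemctl restart apache2"
}

# Versions from this post; adjust to whatever is actually installed.
switch_php_mod 8.1 8.3
```

Once the printed commands look right, they can be run by hand one at a time.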

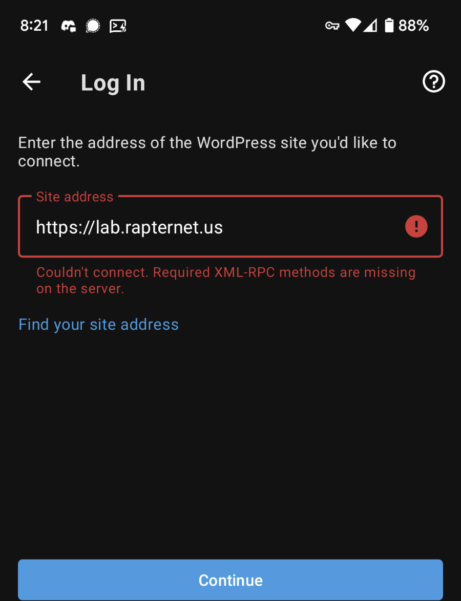

Fixing the WordPress App Connection to my site

It started with the WordPress app no longer working on my phone. This happened a few months back as well, and it stayed broken for a few months before it started working again. This time around I was rather annoyed and wanted to figure out what was going wrong and fix it. In the end it came down to XML-RPC and an “optional” package in the WordPress health check.

Continue reading “Fixing the WordPress App Connection to my site”

Upgrading the Network Video Recorder

I’ve been using a small 1U server (running an E3 V2 CPU) to run Blue Iris for a few years now, and in an attempt to find savings on my electric bill (and subsequent AC savings too), I decided to do some digging for a replacement. I gathered some metrics on the old server and found it drawing 80-100 watts, so I was aiming to cut that by 50%. I ended up finding a cute little 2-bay “NAS” online that supports a low-power AMD CPU and can handle the HDD space I need for the video recordings.

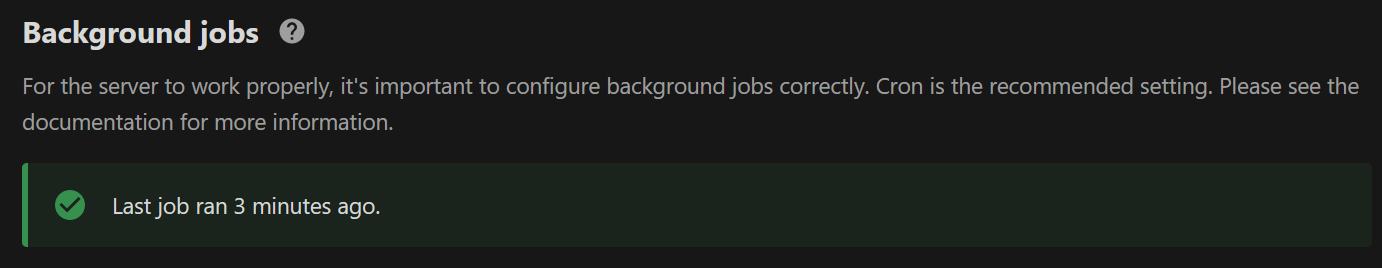

Continue reading “Upgrading the Network Video Recorder”

Upgrading my NextCloud to use Redis and fix Cron

I’ve had NextCloud installed for a while now as part of my core infrastructure; however, it hasn’t been running well. I’ve always had issues getting the cron jobs to run, along with occasional file locking problems. Recently the file locking problems got worse, and I finally decided to fix both issues in one hopefully straightforward action.

Continue reading “Upgrading my NextCloud to use Redis and fix Cron”

Using ntfy.sh to send unRAID notifications

unRAID supports multiple notification platforms for keeping you informed about what the server is doing. The only problem is that I don’t use any of them. I have, however, started using ntfy.sh, which has been working well for my Home Assistant notifications and is a very simple platform. What I’d like to do is integrate it into unRAID so that I can make use of it there as well.

Continue reading “Using ntfy.sh to send unRAID notifications”

UnRAID XFS MetaData Corruption

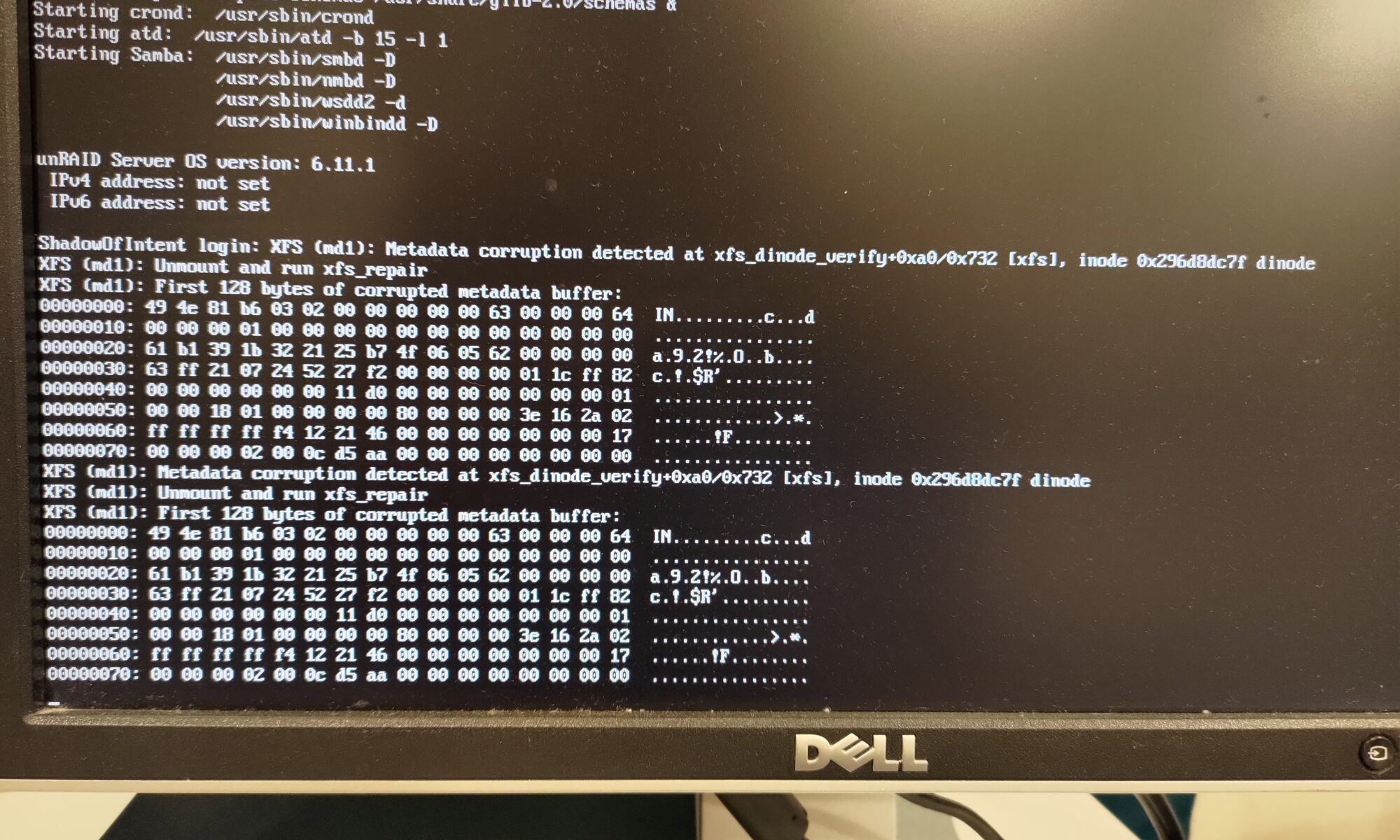

One thing you never want to see on your file server’s screen is an error about corrupted data, let alone right after seeing read errors from one of your disks during a parity check. I recently had that sort of fun with my NAS.

Continue reading “UnRAID XFS MetaData Corruption”

Cleaning up the docker registry

While working on my Docker swarm recently, I noticed that my storage was running low. After some investigation, I found that my Docker registry was using 17GB. I have no need to keep that many versions of my custom-built containers on hand, so I went through the process of cleaning it up.

Continue reading “Cleaning up the docker registry”

Setting up Giscus Comments on WordPress

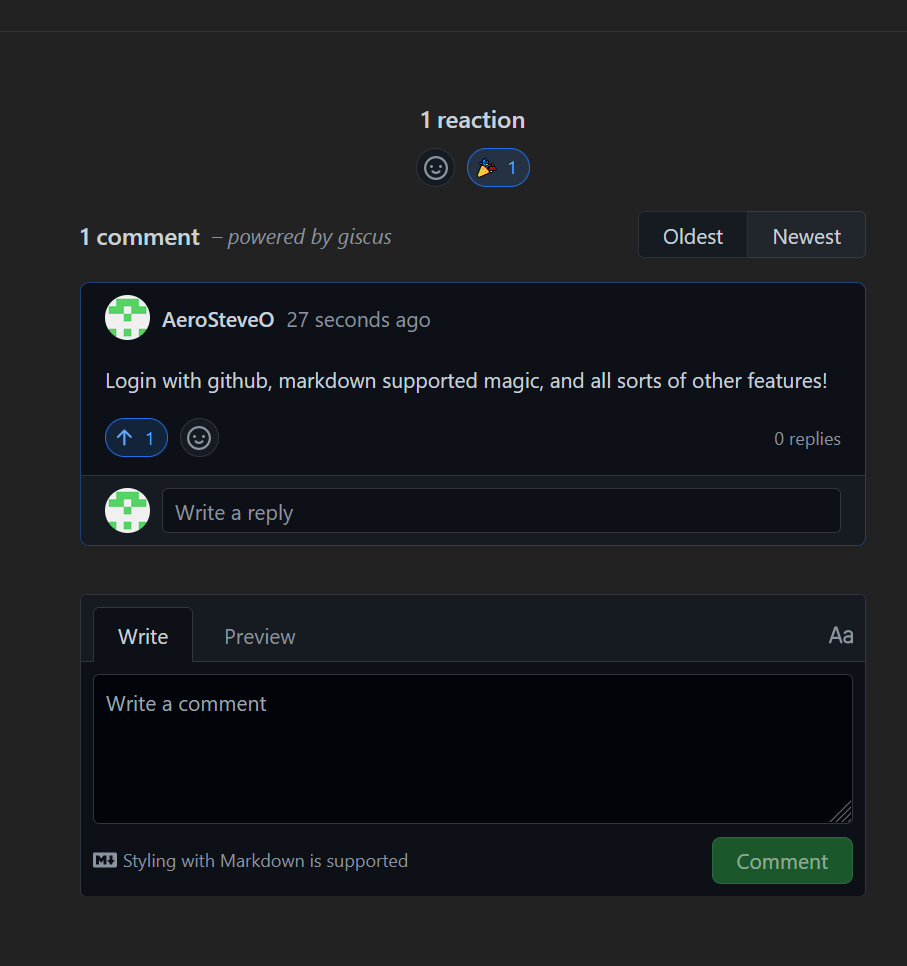

This blog was originally set up in 2016 and has never had a really useful comments section. There was a time when the only things showing up were trackbacks to malicious websites. That wasn’t exactly a positive addition, so I disabled trackbacks and commenting to prevent any misleading comments. As part of overhauling my webserver, and having recently learned about Giscus (which uses GitHub accounts and a GitHub backend for commenting), I decided to try to get a comments section back up and running. So here we have a guide for setting up Giscus comments on WordPress.

Continue reading “Setting up Giscus Comments on WordPress”

Upgrading Ubuntu Host for Unifi Controller

My Unifi controller was installed on an Ubuntu server back on 16.04 LTS. That release finally reached end of life, and I needed to upgrade to Ubuntu 20.04 LTS. I decided to first try a release upgrade in two steps. I’d tried this in the past and failed, which is why the server stayed out of date for so long, but maybe the upgrade process had since been fixed so things would work automagically. I was a bit wrong on that; however, I also found out that rebuilding from scratch is pretty easy.

Continue reading “Upgrading Ubuntu Host for Unifi Controller”