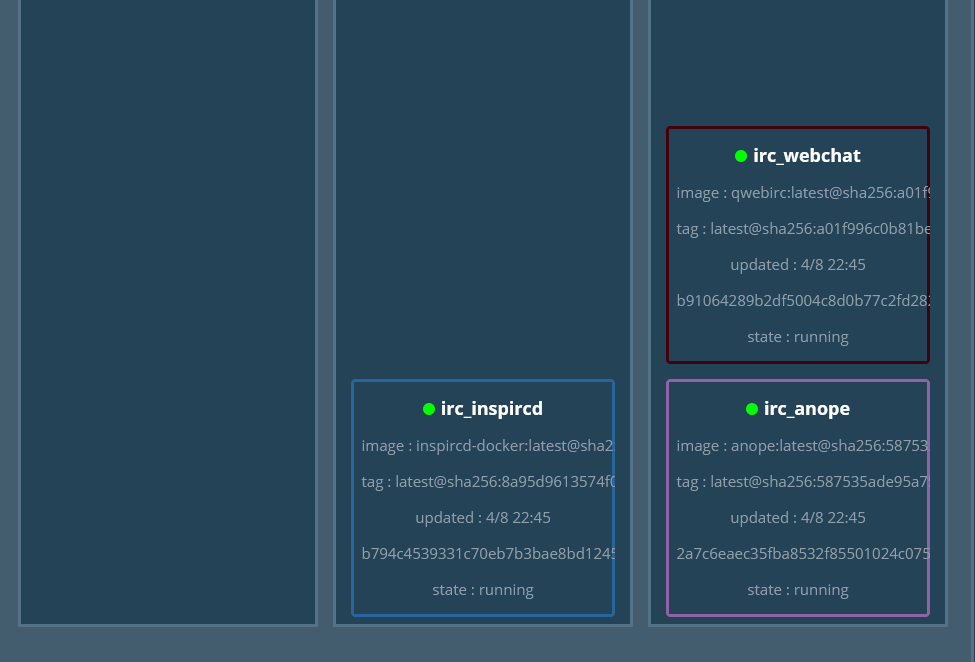

One of the first application stacks I went to install and setup on my new Raspberry Pi docker cluster was Inspircd/qwebirc/anope. This stack was running originally on a raspberry pi 1b (256MB RAM version). I wanted to move this off the pi1 since it was out of date and would need a complete reinstall to be back to full patch status. However shortly after getting it running in my swarm, I ran into issues.

The IRC server would restart every few hours, sometimes it would restart every 10 minutes or so. I deemed that as unacceptable on my basic setup even for just using it in development of IRC bots.

Continue reading “Disabling the Aggressive Inspircd Health Check”