I have Watchtower set up on my Unraid server to automatically update all my Docker containers. This is quite convenient, but it does come with some dangers. For instance, my InfluxDB instance recently updated to version 2. This version of InfluxDB has a brand new query language, a new authentication system, and much more. It also breaks compatibility with my Telegraf, Unifi-Poller, Grafana, and other services. Instead of reverting to an older version and resisting the slow change of technology, I decided to start stepping up services to work with the new version. So I now get to introduce myself to InfluxDB V2 and then move on to stepping up the services that use it.

Continue reading “Intro to InfluxDB V2”

Sending Notifications to Phones from Home Assistant

As I build out my smart home systems, I have realized that I want to be able to push notifications from them to my phone. I have other services that do this through email, so I wanted to set up something similar with Home Assistant. I have a list of a few things I would like notifications for, and getting one or two of them off the list will at least prove out my implementation and give me some more capability from my Home Assistant setup.

Some things to notify me of

- water leaks

- washing machine done

- chest freezer without power

Setting up Z-Wave in Home Assistant on Proxmox

Recently I have ended up with two Z-Wave devices in my home, and while the devices work just fine without Z-Wave enabled, I wanted to mess around with them in Home Assistant. I’ve seen lots of information on Z-Wave and Zigbee devices and sensors and had been looking at getting some anyway, so I used this as a reason to jump in.

Since I run Home Assistant on a VM via Proxmox, my setup will end up being a bit different than the usual “just plug in the USB Z-Wave controller and go” for those running Home Assistant on a Raspberry Pi or NUC.

Continue reading “Setting up Z-Wave in Home Assistant on Proxmox”

NextCloud “Maintenance” Mode Error Resolution

For a while my NextCloud server decided to stop allowing my phone or desktop to upload to it, claiming to be in “maintenance mode”. However, I could log into the web UI with no problems, and nothing in the logs showed it being in maintenance mode. This started to frustrate me, as I could find nothing to indicate why my clients thought the server couldn’t be used. I tried a number of things:

- Clearing out the file locks table (there were 600,000 entries in it, and I’m the only user on the server)

- occ db:add-missing-columns

- occ db:add-missing-indices

- occ db:add-missing-primary-keys

What finally worked:

- occ files:scan --all

Finally, with files:scan --all, it started working again and my app was able to start uploading to the server. If you have a NextCloud server and have been running into “maintenance mode” errors when your server is not in maintenance mode, this is certainly worth trying.
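For reference, the commands tried above can be collected in one place. The sketch below only builds the command lines in the order they were attempted; it does not run anything. The occ path (`/var/www/nextcloud/occ`) and the web server user (`www-data`) are assumptions that vary by install, and the helper name `build_occ_commands` is made up for illustration. Clearing the file locks table is a database operation and is left out.

```python
import shlex


def build_occ_commands(occ_path="/var/www/nextcloud/occ", web_user="www-data"):
    """Return the occ invocations from the post, in the order they were tried.

    occ_path and web_user are assumptions; adjust them for your install.
    """
    subcommands = [
        ["db:add-missing-columns"],
        ["db:add-missing-indices"],
        ["db:add-missing-primary-keys"],
        ["files:scan", "--all"],  # the step that finally resolved the error
    ]
    # occ is normally run as the web server user rather than root.
    prefix = ["sudo", "-u", web_user, "php", occ_path]
    return [prefix + sub for sub in subcommands]


if __name__ == "__main__":
    # Print the commands so they can be reviewed before running them by hand.
    for cmd in build_occ_commands():
        print(shlex.join(cmd))
```

Printing rather than executing keeps the sketch safe to run anywhere; each line can be pasted into a shell on the server once the path and user are confirmed.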

Some GitHub Issues worth checking:

Time Lapse 3d print

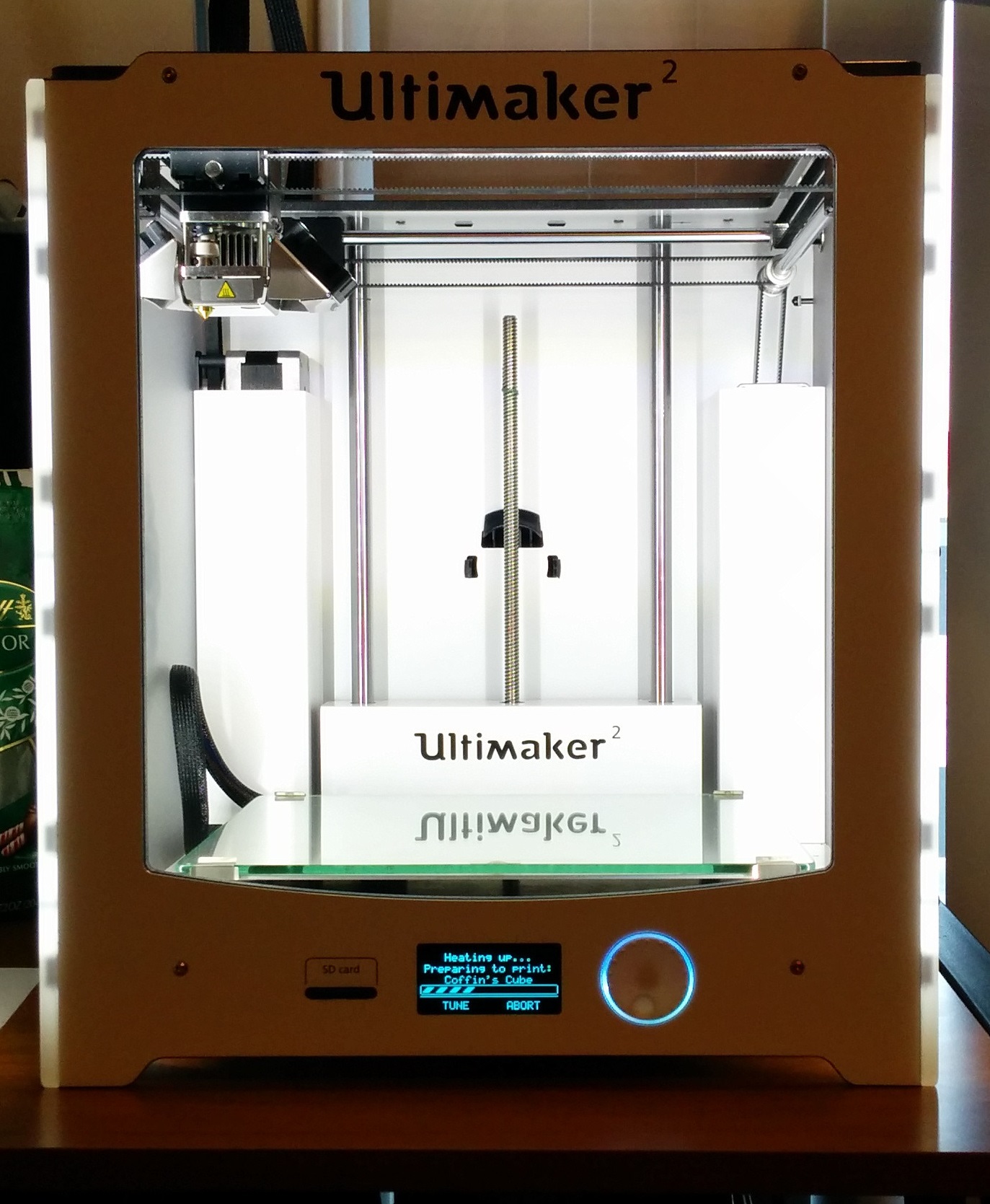

I wanted to create a time lapse of a 3D print, but I wasn’t sure of the best way to do this. I’ve made time lapses using OctoPrint before, but for that to work, OctoPrint needed to be the machine driving the printer. I tend to prefer running my Ultimaker from the SD card, as I’ve forgotten what all my previous OctoPrint settings were to get it running as smoothly as the Cura settings from the card. I also wanted to work on a more generic way to create a time lapse.

Continue reading “Time Lapse 3d print”

BlueIris and the No Signal Camera

I recently set up a few new cameras on my BlueIris box, but one camera in particular was giving me problems. I tried a few different configurations and each time I got a no signal error. I could change the IP to another camera and all was well, but this one in particular was angry at me. I could log into the camera’s web interface and view the feeds, so I knew it was working; something was just off in the configuration. I ended up looking at the Blue Iris status UI and saw that I was getting about 1.5 FPS through, at a slightly lower bitrate than the camera was configured for at the time. I updated the camera config to drop the bitrate and voila: signal and smooth video.

The root cause is something in the cable, either too much interference from AC wiring, or an end isn’t as well crimped as it could be. Either way, I got my camera up in my Blue Iris instance and things worked fine after that.

Apt Key Expired in Ubuntu

I was updating my boxes as usual when I encountered an error while trying to run updates on my UniFi controller. This lives on a slightly older box (I tried upgrading it at one point and not all the dependencies were supported yet on the newer version), and the error showed up when running the apt commands. One of the keys had expired for a component needed by the controller. So let’s figure out how to update that key so we can update the box once more.

Continue reading “Apt Key Expired in Ubuntu”

Calling HASS API from Various Shells

While working on making my macro pad trigger Home Assistant automations, I found the API would be the easiest way to integrate the systems. Here’s some of my experimenting with the API and how to call it from Linux curl and PowerShell Invoke-WebRequest.

Linux Curl

Using the Linux curl command is pretty straightforward.

curl -X POST http://hass.local:8123/api/services/switch/toggle -H 'Authorization: Bearer ABCDEF' -d '{"entity_id": "switch.testsubject_test_subject"}'

PowerShell Invoke-WebRequest

PowerShell is a bit more annoying to get everything right, as Invoke-WebRequest is a bit pickier on its input arguments.

Invoke-WebRequest -Method POST -Uri "http://hass.local:8123/api/services/switch/toggle" -Headers @{"Authorization"="Bearer ABCDEF"} -Body '{"entity_id": "switch.testsubject_test_subject"}'

Apparently Windows has a build of the official Unix curl and tar commands, but my PowerShell prompts all had curl as an alias for Invoke-WebRequest. I did find that if I call curl.exe, it runs the official curl command.
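For comparison with the two shell one-liners, here is a minimal Python sketch of the same call using only the standard library. It reuses the host, placeholder token, and entity from the examples above; the function name `build_toggle_request` is made up for illustration, and the explicit `Content-Type` header is an addition the shell examples do not send. The request is only built here, not sent, so the sketch runs without a Home Assistant instance.

```python
import json
import urllib.request


def build_toggle_request(base_url, token, entity_id):
    """Build (but do not send) a POST to the switch/toggle service endpoint."""
    payload = json.dumps({"entity_id": entity_id}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/api/services/switch/toggle",
        data=payload,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            # Assumption: declare the body as JSON explicitly.
            "Content-Type": "application/json",
        },
    )


if __name__ == "__main__":
    req = build_toggle_request(
        "http://hass.local:8123",           # host from the examples above
        "ABCDEF",                           # placeholder token, as in the post
        "switch.testsubject_test_subject",  # entity from the examples above
    )
    print(req.get_method(), req.full_url)
    # To actually send it, with a real long-lived access token in place:
    # with urllib.request.urlopen(req, timeout=10) as resp:
    #     print(resp.status)
```

Keeping the token in a variable rather than pasting it into a prompt also sidesteps the copy and paste problem with tokens between shells.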

Conclusion

Do be careful when copying and pasting between shells. I found that copying out of the PowerShell prompt and pasting back in would yield an invalid token. I ran into the same thing in the WSL terminal.

The API is pretty straightforward after a little bit of trying things out. The documentation covers the capability but doesn’t have as many examples as I would have liked.

Resources

WLED Automation for Roku in Home Assistant

I was able to get WLED going on a light strip, but I was short on ideas for how to make use of it. I finally decided to go with the tried and true movie lighting. So my goal is to set up automations so that when my Roku begins playing something, the WLED strip dims and switches to a specific color (we’ll go with red), and when I pause or stop playing, it turns to max brightness white light. This will give lower lighting while watching and brighter lights if something is paused (and I have to go get something). I’m still not entirely sure if I will end up making use of this in the end, but it will give me some ideas on what I can do and how to do it.
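The rule described above boils down to a small state-to-lighting mapping. The Python sketch below shows only that logic, not a Home Assistant automation: it maps a media player state string to a payload in the shape WLED's JSON state API accepts (`on`, `bri`, and a segment color). The dim level of 40 and the function name `wled_payload_for` are assumptions chosen for illustration, and every state other than "playing" is treated as paused or stopped.

```python
DIM_RED = {"on": True, "bri": 40, "seg": [{"col": [[255, 0, 0]]}]}
BRIGHT_WHITE = {"on": True, "bri": 255, "seg": [{"col": [[255, 255, 255]]}]}


def wled_payload_for(player_state):
    """Pick the WLED state for a given media player state.

    "playing" dims the strip to red; anything else (paused, idle, and so on)
    goes to full-brightness white so the room is lit when playback stops.
    """
    if player_state == "playing":
        return DIM_RED
    return BRIGHT_WHITE


if __name__ == "__main__":
    for state in ("playing", "paused", "idle"):
        print(state, wled_payload_for(state))
```

In a real setup the same decision would live in an automation triggered by the Roku's state changes; the function form just makes the two cases explicit.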

Continue reading “WLED Automation for Roku in Home Assistant”

NextCloud File Lock Error in Joplin

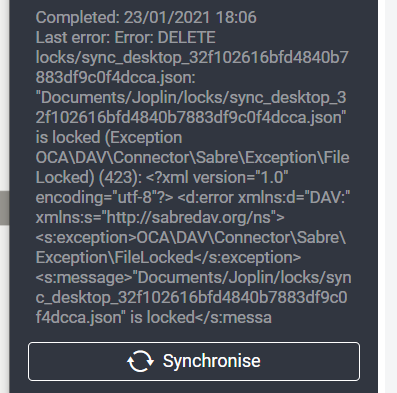

I encountered a problem recently on the Joplin desktop app where it couldn’t synchronize due to a file lock being stuck in NextCloud. Joplin uses a file in its data directories to determine sync status and lock status on files being edited, and this file was stuck locked in NextCloud with no way to delete it. I did some searching, found some blog posts on the subject, and found this on how to solve it. I’ll be going through the manual solution to this problem and updating the process with my experience.

Continue reading “NextCloud File Lock Error in Joplin”